Abstract

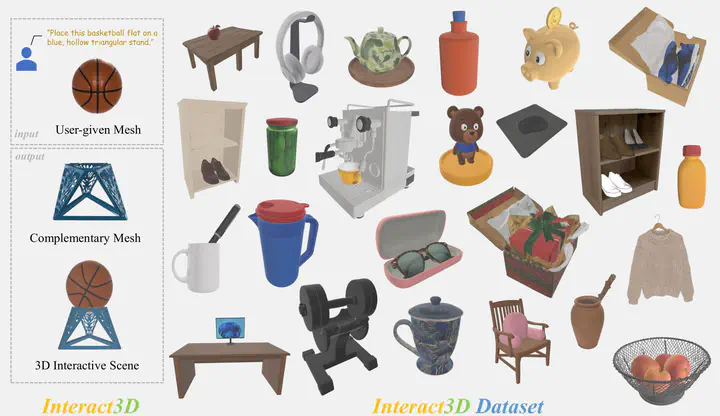

We present Interact3D, a framework for generating physically plausible, interactive 3D compositional objects. Given a user-provided mesh and a text prompt describing a complementary object and its spatial relationship to that mesh, Interact3D synthesizes a high-fidelity complementary 3D asset and physically composes the two objects into a coherent interactive 3D scene. Our two-stage pipeline combines global-to-local geometric registration with SDF-based optimization to prevent intersections, and incorporates a VLM-driven refinement module that self-corrects generated assets through iterative multi-view analysis, producing collision-aware compositions with improved geometric fidelity.
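To make the idea of SDF-based intersection prevention concrete, the following is a minimal sketch and not the Interact3D implementation. The abstract does not specify the loss or the optimizer, so everything here is an assumption for illustration: an analytic sphere stands in for the signed distance field of the user-provided mesh, the complementary object is reduced to a set of surface sample points, only a rigid translation is optimized, and the names `sphere_sdf`, `penetration_loss`, and `resolve_intersection` are hypothetical.

```python
import numpy as np


def sphere_sdf(points, center, radius):
    """Signed distance to a sphere: negative inside, positive outside."""
    return np.linalg.norm(points - center, axis=1) - radius


def penetration_loss(points, center, radius):
    """Sum of squared penetration depths over points that lie inside the sphere."""
    depth = np.maximum(0.0, -sphere_sdf(points, center, radius))
    return float(np.sum(depth ** 2))


def resolve_intersection(points, center, radius, lr=0.1, steps=200, tol=1e-6):
    """Find a rigid translation of `points` that removes penetration.

    Runs plain gradient descent on the squared-penetration loss
    (an assumed objective, chosen only for illustration).
    """
    offset = np.zeros(3)
    for _ in range(steps):
        diff = points + offset - center
        dist = np.linalg.norm(diff, axis=1)
        depth = np.maximum(0.0, radius - dist)
        if depth.max() < tol:
            break  # no sample point penetrates by more than tol
        # Outward unit normals of the sphere at each sample point.
        normals = diff / np.maximum(dist, 1e-9)[:, None]
        # Gradient of sum(depth^2) with respect to the translation.
        grad = np.sum(-2.0 * depth[:, None] * normals, axis=0)
        offset -= lr * grad
    return offset


if __name__ == "__main__":
    center = np.zeros(3)
    pts = np.array([[0.9, 0.0, 0.0], [0.8, 0.1, 0.0], [1.2, 0.0, 0.0]])
    shift = resolve_intersection(pts, center, 1.0)
    print("loss before:", penetration_loss(pts, center, 1.0))
    print("loss after: ", penetration_loss(pts + shift, center, 1.0))
```

In this toy setup the penalty is zero for points outside the surface, so the optimizer only moves the object as far as needed to clear the overlap. A mesh-based system would presumably query a precomputed or learned SDF instead of the analytic sphere and optimize a full pose rather than a translation.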

Publication

arXiv, 2026